Give ChatGPT a different test!

“ChatGPT Outperformed Medical Students on USMLE,” “AI Passes Radiology Board Exam.”

We all remember when these types of headlines started popping up two years ago.

Everyone started asking what this meant for medical education and whether clinicians should worry about their jobs, as creatives and administrative workers were beginning to.

If you truly understand how generative AI works, however, you knew to be skeptical of what these headlines imply.

- After all, multiple-choice test scores do not accurately predict real-world performance. This is true both for teenagers applying to universities and for AI.

- For humans, test-taking is a skill in itself, developed over weeks and months of preparing for exam-specific scenarios.

- For AI, it’s a little different. The reason LLMs can so easily outperform us on standardized tests is that their entire function depends on probabilities of word association.

But a physician doesn’t prepare to treat patients by memorizing which groups of words are likely to go together. They train on real patients. This is why, although we keep hearing of AI’s outstanding test performance, it doesn’t quite measure up in real-world care scenarios.

- In fact, when researchers built MedAlign, a tool for comparing AI’s performance on real-world physician queries with that of humans, AI had a 35 percent error rate.

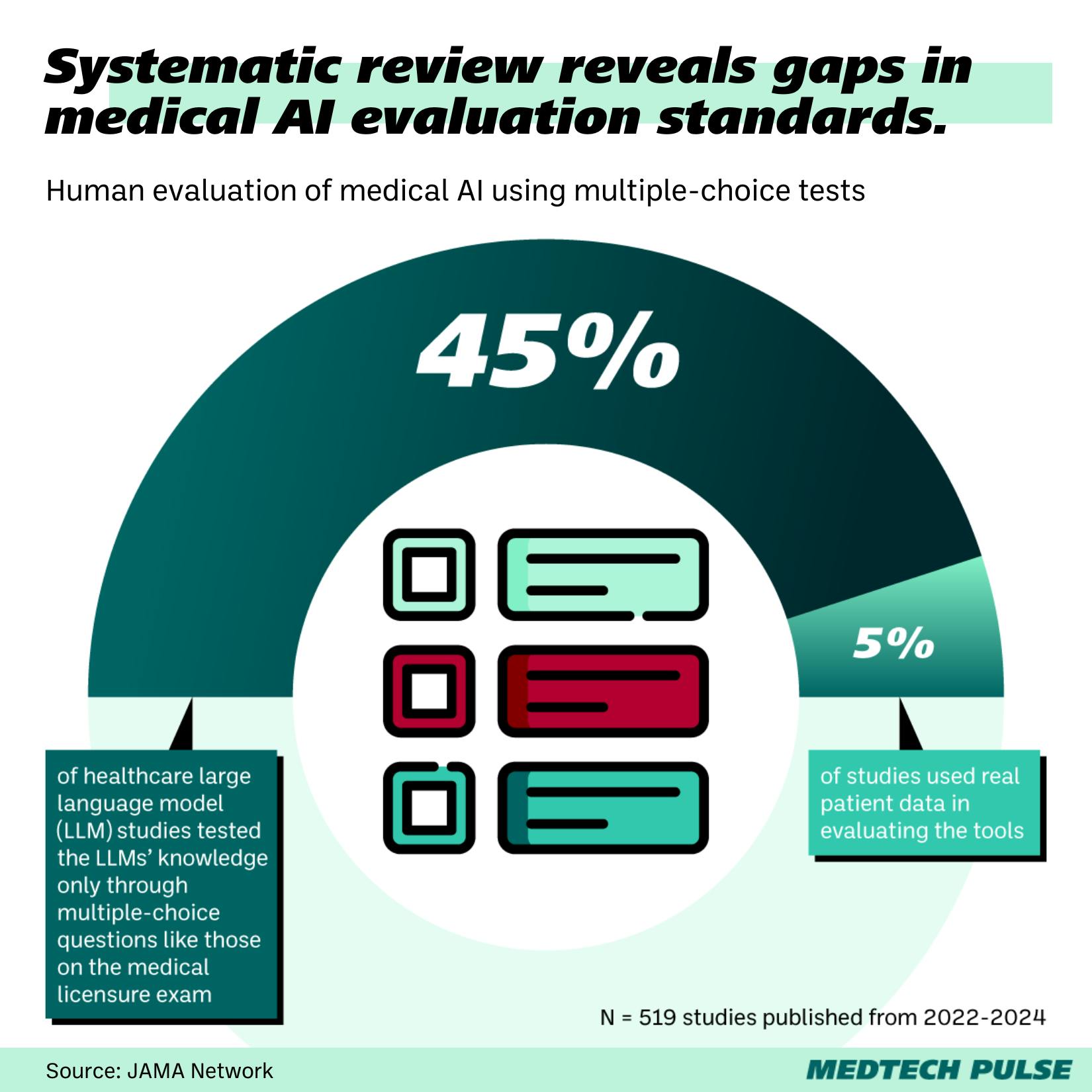

Yet, a major portion of studies evaluating LLMs’ healthcare potential depends on exactly this kind of multiple-choice test. A systematic review found that only 5 percent of such studies use real patient data to evaluate the AI tools.

Findings like this are leading to more and more calls to ditch this multiple-choice testing standard, most recently in an NEJM AI editorial with a delightful title: “It’s Time to Bench the Medical Exam Benchmark.”

So, how can we better evaluate LLMs’ performance on specific healthcare tasks?

It starts with admitting one model can’t (and probably shouldn’t) be tasked with everything. Then, it’s about training specialized tools on real-world scenarios and matching them with the right-fit use cases.

Stanford researchers are creating a tool that does just that, offering health systems and AI developers a way to compare models and find the ones best suited to different medical tasks.

I’m excited to read about the kind of work that helps make medtech more human, and thus more deserving of a place in our medical toolkits. I’ll keep bringing you more of these stories in this newsletter, and together, we can cheer on the medtech pioneers building the more effective AI tools we all deserve.